Imaginary and complex numbers are handicapped by the names we gave them. “Imaginary” has obvious and bad connotations: it implies an object made up, perhaps not useful; “complex” similarly seems to argue the numbers are too hard to use. As with so much in math and physics, however, the names are historical, and complexity is context-dependent. In particular, imaginary numbers have an obvious reason for existence (they are the square roots of negative real numbers), and as I noted in an earlier post, complex numbers are incredibly useful. While it’s true that the algebra of complex numbers is a little trickier than “ordinary” algebra, it’s not phenomenally harder. The order of multiplication doesn’t matter, unlike with quaternions, Clifford numbers, or Grassmann numbers. In fact, for some applications like electrical engineering or signal processing, complex numbers turn out to be easier to use than real numbers! Of course, if a particular type of math makes something simpler, it might still just be a tool, a convenience. Sometimes complex numbers are indeed mainly a convenience, but because of their deep connection to geometry, they are as real as any other mathematical device.

The earliest history of complex numbers ties them to solving algebraic equations, like quadratic equations: for example, x² + 1 = 0 has no real solutions, but it does have the two imaginary solutions x = ±i. However, mathematicians soon realized that complex numbers could also be used to represent points on a plane. In fact, today is the birthday of mathematician Caspar Wessel, who characterized the complex plane. Thanks to this discovery, we know that anything that can be represented by a two-dimensional mathematical object (coordinates) can also be written as a complex number. We can rotate objects in two dimensions (see the figure at left) and describe waves. While complex numbers aren’t strictly required for either of those operations, they make our mathematical lives easier by their existence.
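To make the rotation idea concrete, here is a minimal Python sketch. It treats a point (x, y) as the complex number x + iy and rotates it about the origin by multiplying by a complex number of magnitude 1. The function name `rotate` and the sample point are illustrative choices, not anything from the original post.

```python
import cmath
import math

def rotate(point: complex, angle_deg: float) -> complex:
    """Rotate a point in the plane about the origin by angle_deg degrees."""
    # exp(i * theta) has magnitude 1, so multiplying by it turns the
    # point counterclockwise without changing its distance from the origin.
    return point * cmath.exp(1j * math.radians(angle_deg))

# The point (1, 0) written as the complex number 1 + 0i.
p = complex(1.0, 0.0)
q = rotate(p, 90.0)  # a quarter turn counterclockwise

# Up to floating-point rounding, q is the point (0, 1).
print(round(q.real, 12), round(q.imag, 12))
```

The same rotation can be done with a 2×2 matrix of sines and cosines; the complex version simply packs it into one multiplication.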

I used to give a talk in graduate school called “Imaginary Numbers are Not Real”, a title and concept I borrowed from Stephen Gull, Anthony Lasenby, and Chris Doran. The talk and paper addressed Clifford algebras, arguing that every imaginary number in our math corresponds to a geometrical concept. I still agree with most of what I said back then, but I’ve changed my rhetoric a bit: I now say imaginary numbers are real, in the sense that they provide meaning to the results of real physics experiments. If the quantities they characterize exist in nature, I say complex numbers are as real as any other mathematical entity—not as a Platonic ideal, but as a true description of the Universe in which we live.

Quantum Mechanics is Complex

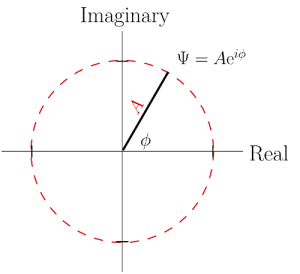

However, in quantum mechanics complex numbers aren’t just a convenience: they’re necessary. The fundamental equation in quantum physics, the Schrödinger equation (yup, named for the same dude as the cat), has an imaginary number in it, and the solutions to the equation are inherently complex numbers. These solutions are called wavefunctions, since they are wavelike in character. Using Euler’s formula to write complex numbers in polar form, the wavefunction looks like this:

$$\Psi = A e^{i\varphi}$$

where Ψ is the Greek letter “Psi” (often pronounced “sy” in English, but properly pronounced “psee”, with the “p” included) and φ (“fie” or “fee”) is the phase of the wavefunction. The symbol A is the magnitude of the wavefunction; the figure at right shows how to interpret all this graphically.

The wavefunction by itself isn’t a measurable quantity, but it contains measurable quantities. (Quantum theory experts, I’m obviously oversimplifying, but please bear with me!) The probability of the outcome of a particular measurement is found by multiplying the wavefunction by its complex conjugate:

$$\Psi^{*}\Psi = \left(A e^{-i\varphi}\right)\left(A e^{i\varphi}\right) = A^{2}$$
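A quick numerical check of that multiplication, as a sketch: build a wavefunction from a magnitude and a phase, multiply it by its complex conjugate, and try several phases. The helper names and the sample values are made up for illustration.

```python
import cmath

def wavefunction(magnitude: float, phase: float) -> complex:
    """Polar form of a complex number: magnitude * exp(i * phase)."""
    return magnitude * cmath.exp(1j * phase)

def probability(psi: complex) -> float:
    """Multiply psi by its complex conjugate; the product is a real number."""
    return (psi.conjugate() * psi).real

A = 0.6                  # illustrative magnitude, not a value from the post
phases = (0.0, 1.0, 2.5)  # phases in radians, also illustrative

for phi in phases:
    # Every line prints a value equal to A**2 up to rounding,
    # whatever the phase is.
    print(phi, probability(wavefunction(A, phi)))
```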

In other words, the probability only depends on the amplitude, not the phase. So why bother with complex numbers? If the phase isn’t directly measurable, what does it even mean?

The answer arrives if we have multiple particles, or if a particle can follow more than one path. Think of it this way: the phase is like the second hand on a clock, sweeping around the clock face once every minute. There is no absolute measure of time: we measure time relative to “universal time” (formerly “Greenwich mean time”), but that’s arbitrary, selected for historical reasons when England had the most powerful military force in the world. My clock shows one time, while my friends’ clocks in London, Calgary, San Francisco, and Kyoto show different times, but all our clocks measure the same time intervals. If something takes 5 seconds in Richmond, it will also take 5 seconds in Humptulips, Washington, even though it’s three hours “earlier” there by the clock. In other words, the duration of something happening is the same, wherever it’s measured. (Relativity complicates things when one observer is moving close to the speed of light relative to the other, but we don’t need to worry about that here.)

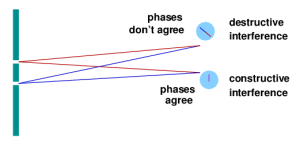

For quantum physics, think of the phase like that second hand: while the absolute phase is a meaningless concept, the relative phase between two particles or between two possible paths that can be followed by one particle is a measurable quantity. Consider the classic two-slit experiment in quantum mechanics: a single particle (e.g., a photon) is sent toward a barrier with two openings in it. From the quantum point of view, the photon can follow any number of trajectories, as dictated by its wavefunction. Each trajectory will accumulate a certain phase as the photon travels, but since it can pass through either opening, some paths will be preferred over others. To figure this out, you calculate the phase difference between the two possible trajectories that land at a particular spot on a detection screen. (Phase also wraps around, just like the second hand: an elapsed time of 60 seconds or 120 seconds brings the second hand back to its starting point. Similarly, a 360° phase difference is the same as 0°.) If the phase difference is zero, then the photon is likely to land at that spot. If the phases are opposite (a phase difference of 180°), then the photon won’t land there: the probability is zero. Phase differences between those extremes mean intermediate probabilities. Putting all these possibilities together reveals an interference pattern: points of high probability are points of maximum constructive interference, and points of zero probability are places where destructive interference occurs.
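The two-path bookkeeping can be sketched in a few lines of Python. The sketch assumes the two trajectories contribute with equal magnitude (a simplification) and normalizes the result so that perfectly constructive interference gives 1; the function name is hypothetical.

```python
import cmath
import math

def relative_probability(phase_difference_deg: float) -> float:
    """Relative chance of landing at a spot, given the phase difference
    between two equal-magnitude paths (an assumed simplification)."""
    delta = math.radians(phase_difference_deg)
    # Add the two path contributions as complex numbers. Only the
    # difference in phase matters, so the first path is given phase zero.
    total = 1 + cmath.exp(1j * delta)
    # Squared magnitude of the sum, divided by 4 so the maximum is 1.
    return abs(total) ** 2 / 4

for deg in (0, 90, 180, 360):
    print(deg, round(relative_probability(deg), 6))
```

A phase difference of 0° gives the maximum, 180° gives zero up to rounding, 90° falls halfway between, and 360° matches 0° because the phase wraps around.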

This way of thinking (known as path integration) comes from Richard Feynman, and I’ve been planning to write a post about it for some time, since there’s a lot more to be said. We won’t go any deeper into it right now, since I want to return to my main point about imaginary numbers…and another interesting experiment.

Tunneling Time is Complex

Particles can be confined by mutual attraction, like two atoms in a molecule or an electron in an atom. The electric attraction presents a barrier to the electron escaping, but if you calculate it using quantum mechanics, a little bit of the wavefunction of the electron might extend into the barrier and beyond. This means there is a small probability the electron can escape, a phenomenon known as quantum tunneling. Tunneling by electrons out of atoms is pretty rare in isolation, but it can be induced by lowering the barrier. (Tunneling is also responsible for the decay of unstable atomic nuclei, but that’s a topic for another day.)
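For a rough sense of how much lowering the barrier matters, here is a sketch that uses a standard textbook estimate for a wide rectangular barrier, T ≈ exp(−2κL) with κ = √(2m(V − E))/ħ. A real atom or molecule does not have a rectangular barrier, and the energies and width below are hypothetical numbers chosen only for illustration.

```python
import math

HBAR = 1.054571817e-34      # reduced Planck constant, in J*s
M_ELECTRON = 9.1093837e-31  # electron mass, in kg
EV = 1.602176634e-19        # one electron-volt, in joules

def tunneling_probability(energy_ev: float, barrier_ev: float, width_m: float) -> float:
    """Rough estimate T ~ exp(-2 * kappa * L) for a wide rectangular barrier."""
    if energy_ev >= barrier_ev:
        raise ValueError("estimate only applies when the energy is below the barrier")
    # kappa sets how quickly the wavefunction decays inside the barrier.
    kappa = math.sqrt(2 * M_ELECTRON * (barrier_ev - energy_ev) * EV) / HBAR
    return math.exp(-2 * kappa * width_m)

# Hypothetical setup: a 1 eV electron and a barrier 0.5 nanometers wide.
width = 0.5e-9
high = tunneling_probability(1.0, 5.0, width)  # 5 eV barrier
low = tunneling_probability(1.0, 2.0, width)   # barrier lowered to 2 eV

print(f"high barrier: {high:.2e}, lowered barrier: {low:.2e}")
```

Because the barrier height sits inside an exponential, a modest reduction in height raises the escape probability by a large factor.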

A recent experiment, which I covered for Ars Technica, involves inducing an electron to tunnel out of a molecule. The researchers did this in order to time how long tunneling takes, which turns out to be ridiculously short, but still measurable! (The time is of the order of hundreds of attoseconds, or 10⁻¹⁶ seconds.) However, calculating this time theoretically involves looking at the behavior of the wavefunction inside the barrier. To quote from my Ars Technica article:

Once out of the atom, the electron’s motion can be described in terms of a trajectory rather than a wavefunction. “Inside” the barrier, however, things are complex—in both the conceptual and mathematical senses. The quantum mechanical equivalent of the electron’s velocity is described by a complex number: the sum of a real and imaginary number.

Recall that imaginary numbers can be found by taking the square root of negative numbers. Now consider that the value of a quantum wavefunction at each point in space is a complex number. Since the velocity is complex, the “time” to traverse the barrier must also be complex. That makes it rather difficult to know what to expect when you actually measure the time.

Shafir and his co-workers found that their measurements matched the real portion of the complex time variable predicted by quantum mechanics. This number doesn’t agree with the “classical” time, which is calculated by treating the electron as a particle obeying Newtonian physics. In other words, even though the electron behaves as a particle when it’s free, the full understanding of its motion still requires use of quantum physics.

I spent a few hours last night calculating the tunneling time through a simple barrier, an easier problem than the one these researchers tackled. I may blog what I found later (if anyone is interested), since I haven’t seen anyone do that particular calculation, but as with the molecular tunneling problem, I found a complex value for the velocity of the particle tunneling through, and a corresponding complex time.
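The calculation itself isn’t shown here, so the following is not that calculation. It is only a toy sketch of why a complex velocity appears at all, assuming the naive semiclassical relation v = √(2(E − V)/m) for an electron and made-up values for the energy, barrier height, and barrier width.

```python
import cmath

M_ELECTRON = 9.1093837e-31  # electron mass, in kg
EV = 1.602176634e-19        # one electron-volt, in joules

def semiclassical_velocity(energy_ev: float, potential_ev: float) -> complex:
    """Naive v = sqrt(2 (E - V) / m). cmath.sqrt accepts a negative
    argument, which is what happens inside the barrier (E < V)."""
    return cmath.sqrt(2 * (energy_ev - potential_ev) * EV / M_ELECTRON)

def traversal_time(distance_m: float, velocity: complex) -> complex:
    """Real distance divided by a possibly complex velocity."""
    return distance_m / velocity

# Hypothetical numbers: a 1 eV electron, a 5 eV barrier 0.5 nm wide.
v_free = semiclassical_velocity(1.0, 0.0)    # outside: ordinary real speed
v_inside = semiclassical_velocity(1.0, 5.0)  # inside: no real part
t_inside = traversal_time(0.5e-9, v_inside)

print("free velocity:  ", v_free)
print("inside velocity:", v_inside)
print("inside 'time':  ", t_inside)
```

In this toy model the velocity and time inside the barrier come out purely imaginary. The full quantum treatment in the research paper is more involved, and it is the real part of its complex time that the measurements matched.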

Imaginary numbers are real.

11 responses to “Imaginary Numbers are Real”

[…] Imaginary Numbers are Real « Galileo’s Pendulum […]

Very interested in your “tunneling time through a simple barrier” calculations. Please blog more on “complex time” in QM. What’s the relationship, if any, to Hawking’s (et al) “imaginary time” in BB cosmology?

The complex time in quantum mechanics is a heuristic way of getting at observable quantities when the classical velocity doesn’t exist. When the particle enters the forbidden region (the barrier), its velocity becomes complex – but the distance it travels is a real observable number. That means the time must be complex. The research paper I cite uses Feynman’s path integral technique, but I was trying to reproduce their results using another method, to see if it could be done.

On the other hand, Hawking’s imaginary time is a geometrical construction to turn the singularity at the beginning of the Universe into a smooth surface. He’s using some methods drawn from quantum field theory, but I don’t think his tricks are particularly satisfying – though in his defense, I think he’s abandoned that avenue of investigation.

For the experts, if I understand Hawking’s work properly (he is *not* a clear thinker), he’s performing a Wick rotation to turn the real-number-valued time into an imaginary number, making the Lorentz spacetime of relativity into four-dimensional Euclidean space.

[…] Imaginary Numbers are Real by Matthew Francis […]
